What is OER (Optimized Edge Routing)? In short, OER adds intelligence to the network by looking at the current state of the network and injecting routing information to choose the optimal path. It’s pretty cool if you think about it. All our routing protocols basically select a path based on static information and don’t care about the current state of the network. For example, OSPF will prefer a Gigabit link over a FastEthernet link (once the reference bandwidth is raised so that their costs differ), even if the Gigabit link is 100% congested and the FastEthernet link is doing absolutely nothing.
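To see why OSPF’s choice is static, here is a minimal Python sketch of the Cisco OSPF cost formula: reference bandwidth divided by interface bandwidth, truncated to an integer, with a minimum cost of 1. The reference bandwidth defaults to 100 Mbit, so Gigabit and FastEthernet both end up at cost 1 unless you raise it (for example, to 1000 Mbit). The link dictionaries and the `preferred_link` helper are hypothetical; the point is that utilization is stored but never consulted.

```python
def ospf_cost(interface_bw_mbps: int, reference_bw_mbps: int = 100) -> int:
    """OSPF cost = reference bandwidth / interface bandwidth, truncated, minimum 1."""
    return max(1, reference_bw_mbps // interface_bw_mbps)


def preferred_link(links, reference_bw_mbps=100):
    """Pick the lowest-cost link. Like OSPF, this ignores the 'utilization' field."""
    return min(links, key=lambda link: ospf_cost(link["bw_mbps"], reference_bw_mbps))["name"]


# Hypothetical links: the Gigabit link is saturated, the FastEthernet link is idle.
links = [
    {"name": "GigabitEthernet", "bw_mbps": 1000, "utilization": 1.00},
    {"name": "FastEthernet", "bw_mbps": 100, "utilization": 0.00},
]

print(ospf_cost(1000), ospf_cost(100))              # 1 1 (default reference bandwidth)
print(ospf_cost(1000, 1000), ospf_cost(100, 1000))  # 1 10 (reference raised to 1000 Mbit)
print(preferred_link(links, 1000))                  # GigabitEthernet, despite 100% load
```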

OER can look at the current state of the interfaces and select the best exit path. It can base this decision on delay, response time (measured with IP SLA), and link utilization, but also on the MOS (Mean Opinion Score), so that it can select the best path for VoIP traffic. Optimized Edge Routing and Performance Routing got me hooked because I believe this is what the future of networking will look like. To demonstrate why OER could be useful in your networks, I want to show you a couple of scenarios:

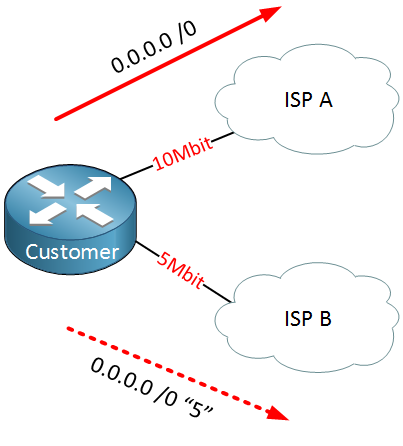

Above, you see a customer router that is connected to two ISPs. ISP A offers a 10 Mbit link and is the primary link. ISP B offers a 5 Mbit link, which is the backup link. Our ISPs don’t want to run BGP with us, so we have to use another routing protocol or static routes for connectivity. The customer is using two static routes:

- 0.0.0.0/0 with the default administrative distance of 1 for ISP A.

- 0.0.0.0/0 with an administrative distance of 5 for ISP B.

The default route for ISP B is called a “floating static route” because it only shows up in the routing table if the default route to ISP A is removed from the routing table. This configuration works, but the downside is that we only use the link to ISP A. In total, we have 15 Mbit of bandwidth (10 Mbit + 5 Mbit from both ISPs), but we can only use 10 Mbit right now. What if you wanted to use both links? Maybe you are thinking about changing the default routes so their administrative distances are equal, and we can do 50/50 load balancing.
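The floating static route behavior can be modeled in a few lines of Python. This is a simplified sketch, assuming every candidate route is for the same prefix and that a route is either usable or withdrawn; the `StaticRoute` class and the `ISP-A`/`ISP-B` next-hop names are hypothetical stand-ins for the router’s real routing table logic.

```python
from dataclasses import dataclass


@dataclass
class StaticRoute:
    prefix: str
    next_hop: str
    admin_distance: int
    usable: bool = True  # set to False when the route is withdrawn (e.g. the link goes down)


def installed_routes(candidates):
    """Return the next hops installed for one prefix: all usable routes sharing the lowest AD."""
    usable = [route for route in candidates if route.usable]
    if not usable:
        return []
    best = min(route.admin_distance for route in usable)
    return [route.next_hop for route in usable if route.admin_distance == best]


isp_a = StaticRoute("0.0.0.0/0", "ISP-A", 1)
isp_b = StaticRoute("0.0.0.0/0", "ISP-B", 5)  # the floating static route

print(installed_routes([isp_a, isp_b]))  # ['ISP-A']
isp_a.usable = False                     # the route to ISP A disappears
print(installed_routes([isp_a, isp_b]))  # ['ISP-B']
```

If both routes had an administrative distance of 1, the function would return both next hops; that is the equal-distance setup discussed next.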

Setting both administrative distances equal might sound like a good idea, but it won’t give you true load balancing. On Cisco routers nowadays, we use CEF (Cisco Express Forwarding), and despite what most people believe, it doesn’t do load balancing but load sharing. The difference is that load balancing means the “load” is shared equally across both links, while load sharing means we use both links, but the load isn’t necessarily balanced. When we have two equal entries, CEF load shares per flow, based on the flow’s source and destination IP addresses. For example, let’s say we have two default routes with the same administrative distance in the topology above, and we have the following sessions:

- A computer with source IP address 1.1.1.1 connecting to a web server with destination IP address 4.5.6.7. We’ll call this “flow 1”; it’s consuming about 500 kbit/s.

- A server with source IP address 2.2.2.2 connecting to a remote backup server with destination IP address 8.9.10.11. We’ll call this “flow 2”; it’s consuming about 4 Mbit/s.

Let’s say CEF puts flow 1 on the link to ISP A and flow 2 on the link to ISP B. We did “load sharing,” but it’s not balanced at all. The link to ISP A now has about 5% utilization (500 kbit/s out of 10 Mbit/s), while the link to ISP B has about 80% utilization (4 Mbit/s out of 5 Mbit/s).
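The utilization numbers above are simple arithmetic, sketched below in Python using the link speeds and flow rates from the example (1 Mbit = 1000 kbit). The flow-to-link placement is taken directly from the scenario rather than computed, since CEF’s actual hash function isn’t reproduced here; what matters is that the flow’s rate plays no part in that choice.

```python
# Link capacities and flow rates from the example, in kbit/s.
LINK_CAPACITY_KBPS = {"ISP A": 10_000, "ISP B": 5_000}

# (source IP, destination IP, rate in kbit/s) for each flow.
FLOWS = {
    "flow 1": ("1.1.1.1", "4.5.6.7", 500),
    "flow 2": ("2.2.2.2", "8.9.10.11", 4_000),
}

# Placement from the scenario. CEF picks the link from the source/destination
# pair; the flow's rate never enters into that decision.
PLACEMENT = {"flow 1": "ISP A", "flow 2": "ISP B"}


def utilization(placement):
    """Return per-link utilization in percent for a given flow-to-link placement."""
    load = {link: 0 for link in LINK_CAPACITY_KBPS}
    for flow, link in placement.items():
        load[link] += FLOWS[flow][2]
    return {link: 100 * load[link] / LINK_CAPACITY_KBPS[link] for link in load}


print(utilization(PLACEMENT))  # {'ISP A': 5.0, 'ISP B': 80.0}
```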

CEF doesn’t look at the load on the links at all, so it would be difficult to configure true load balancing in the scenario above, right? Using OER, this is no problem at all!
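For contrast, here is what a load-aware placement could look like. This is a hypothetical greedy heuristic, not OER’s actual algorithm: it takes the largest flows first and puts each one on the link whose utilization would be lowest after adding it. The data structures mirror the example above and are assumptions for illustration.

```python
# Same example figures, in kbit/s.
LINK_CAPACITY_KBPS = {"ISP A": 10_000, "ISP B": 5_000}
FLOW_RATES_KBPS = {"flow 1": 500, "flow 2": 4_000}


def place_flows(flow_rates, capacities):
    """Greedy, load-aware placement (a hypothetical heuristic, not OER's real logic)."""
    load = {link: 0 for link in capacities}
    placement = {}
    # Largest flows first, so the big flows are placed while there is still headroom.
    for flow, rate in sorted(flow_rates.items(), key=lambda item: item[1], reverse=True):
        # Choose the link whose utilization would be lowest after adding this flow.
        best = min(capacities, key=lambda link: (load[link] + rate) / capacities[link])
        placement[flow] = best
        load[best] += rate
    util = {link: 100 * load[link] / capacities[link] for link in capacities}
    return placement, util


placement, util = place_flows(FLOW_RATES_KBPS, LINK_CAPACITY_KBPS)
print(placement)  # {'flow 2': 'ISP A', 'flow 1': 'ISP B'}
print(util)       # {'ISP A': 40.0, 'ISP B': 10.0}
```

The heuristic needs the current rate of every flow and the capacity of every link, which is exactly the kind of live information that CEF’s per-flow hash doesn’t use.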

Let me show you another scenario:

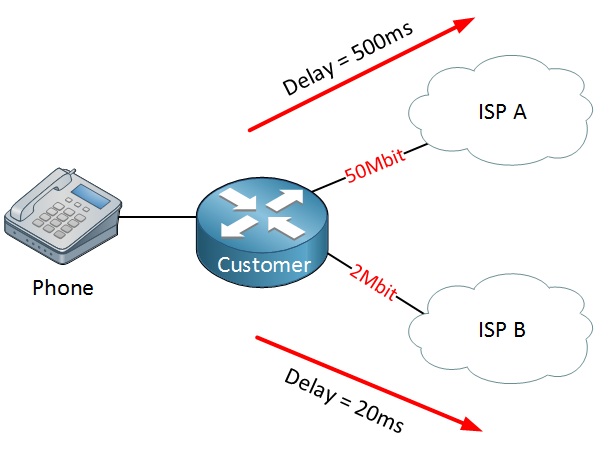

Above, we have the same network, but now we are using VoIP. The link to ISP A is 50 Mbit, and the link to ISP B is 2 Mbit. Since the link to ISP A has higher bandwidth, we are using it as the primary link. Unfortunately, that link currently has a very high delay (500 ms), so it would be better to use the backup link to ISP B, which only has a delay of 20 ms. If you wanted to, you could configure something like this using policy-based routing in combination with IP SLA, but it’s not a very good solution. OER can check the delay of our interfaces and automatically reroute VoIP traffic over another interface when certain criteria are met.
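The delay-based decision can be sketched as a small policy function. This is a hypothetical illustration, not OER’s configuration or internal logic: it keeps VoIP on the preferred link unless that link’s delay exceeds a threshold, then moves it to the lowest-delay link that meets the threshold. The 150 ms threshold (the commonly cited one-way delay budget for voice) and the link dictionaries are assumptions.

```python
# Assumed threshold: 150 ms, the commonly cited one-way delay budget for voice.
VOICE_DELAY_THRESHOLD_MS = 150

# Figures from the scenario; the dictionary layout is hypothetical.
links = [
    {"name": "ISP A", "bw_mbps": 50, "delay_ms": 500, "preferred": True},
    {"name": "ISP B", "bw_mbps": 2, "delay_ms": 20, "preferred": False},
]


def voip_exit(links, threshold_ms=VOICE_DELAY_THRESHOLD_MS):
    """Return the exit link for VoIP traffic under a simple delay policy."""
    preferred = next(link for link in links if link["preferred"])
    if preferred["delay_ms"] <= threshold_ms:
        return preferred["name"]  # primary link is within policy: keep using it
    in_policy = [link for link in links if link["delay_ms"] <= threshold_ms]
    if not in_policy:
        return preferred["name"]  # no link meets the threshold: stay on the primary
    return min(in_policy, key=lambda link: link["delay_ms"])["name"]


print(voip_exit(links))  # ISP B
```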

Let’s make it even more complex…

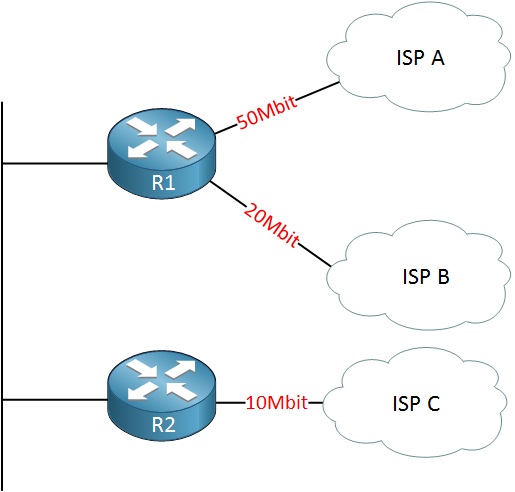

What about the topology above? We have two routers and three links to different ISPs: 50, 20, and 10 Mbit, for a total WAN bandwidth of 80 Mbit. Is there any way to load balance traffic across these links? With regular routing, it’s impossible because R1 and R2 have no idea about each other’s interfaces. They don’t know each other’s utilization, delay, or anything else. Now let’s talk a bit about OER and how it adds more intelligence to the network.
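The difference between a single router’s view and a network-wide view can be sketched in Python. Everything here is hypothetical: the split of the three links across R1 and R2 and the load figures are made-up sample values (the figure may differ), and `least_utilized` is an illustrative helper, not an OER function. It shows that R1 alone would pick its own emptiest link, while a view of all exits finds a less loaded link on R2.

```python
# Hypothetical split of the three links across the two routers, with made-up loads.
BORDER_ROUTERS = {
    "R1": {
        "link-50": {"capacity_mbps": 50, "used_mbps": 45},
        "link-20": {"capacity_mbps": 20, "used_mbps": 4},
    },
    "R2": {
        "link-10": {"capacity_mbps": 10, "used_mbps": 1},
    },
}


def local_view(router):
    """What a single router knows: only its own exit links."""
    return BORDER_ROUTERS[router]


def global_view():
    """What a central device could know: every exit link, keyed by (router, link)."""
    return {
        (router, name): link
        for router, router_links in BORDER_ROUTERS.items()
        for name, link in router_links.items()
    }


def least_utilized(view):
    """Return the key of the link with the lowest used/capacity ratio."""
    return min(view, key=lambda key: view[key]["used_mbps"] / view[key]["capacity_mbps"])


total_wan_mbps = sum(link["capacity_mbps"] for link in global_view().values())
print(total_wan_mbps)                    # 80
print(least_utilized(local_view("R1")))  # link-20
print(least_utilized(global_view()))     # ('R2', 'link-10')
```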

Hi Rene,

I am just wondering if you have seen this technology deployed in any production networks.

It sounds like a predecessor of SDN with great potential, but I am not sure if it has been widely deployed.

Thanks,

C

Hi C,

I haven’t seen this in production networks before. The idea behind it is pretty cool: one “controller” that determines which paths the network uses. However, OER on IOS 12.4 was pretty buggy; it’s better on IOS 15 as PfR (Performance Routing).

SDN goes one step further…it’s also about configuring the network.

Rene

Hi Rene,

Any plans to do a topic on PfR version 3 or IWAN?

Hi Purushotham,

I might add some extra PfR material since it’s on the CCIE written exam, although it’s no longer in the lab. As for IWAN, I’d have to check what is included. I do have quite a bit of DMVPN material.

Rene

Hi Rene,

Many concepts feel easy when I hear them from you. I will wait for your material on PfR.

Thank you for the response.